In my opinion by Amelia Bentley

I ran a simple experiment. Over the past few months, I asked ChatGPT the same question more than fifty times: Who are the ten most important historical figures of all time?

The response? Some variation of Jesus, Muhammad, Isaac Newton, Buddha, Confucius, Napoleon, Einstein, Alexander the Great, Genghis Khan, and Julius Caesar.

The one consistent factor? It was always ten men and zero women.

These are undeniably significant figures. But so are…

- Cleopatra, who ruled Egypt for more than two decades.

- Joan of Arc, who altered the course of a major European war.

- Queen Victoria, who presided over the largest empire in modern history.

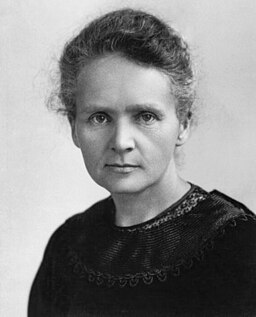

- Marie Curie, the only person in history to win Nobel Prizes in two scientific fields.

- Rosa Parks, whose actions helped catalyse a civil rights movement that reshaped U.S. law.

Their absence does not reflect a lack of importance. It reflects who history has chosen to remember and idolise.

And that raises an uncomfortable question.

Is ChatGPT Sexist?

AI systems like ChatGPT learn from massive amounts of text: textbooks, articles, websites, and much of the written internet. That’s a problem because history has largely been written by men, about men.

Slate found that nearly 76% of popular U.S. history books published last year were written by men. This reflects a much longer tradition: until the 19th century, writing was not considered a suitable profession for women. Even once women were allowed to write, they were never equally represented. One large study of more than 1.5 million authors found that women made up just 27% of published writers over the past 60 years.

AI isn’t consciously deciding that women are less important. It’s reflecting the biases already in the data it was trained on.

How do we use AI without repeating old biases?

Stop treating AI like the source of truth.

When ChatGPT gives you a “list” or “the best answer,” that’s not the answer. It’s an answer, shaped by whatever data it learned from (including the gaps, blind spots, and dominant perspectives). Understanding that difference matters because it puts you back in the driver’s seat as the human doing the thinking.

Use your critical thinking to deliberately widen the lens.

If you know AI might miss people, especially women, Indigenous voices, disabled communities, and anyone outside the “default” story, you can choose to steer it.

Try prompts that force inclusion and surface what’s missing, like:

- “Give me 10 women who shaped this field”

- “Now redo that list with non-Western leaders”

- “What perspectives are missing from your answer? Who might be harmed if we accept it as-is?”

- “Give me three alternative viewpoints: a student, a frontline worker, and a community elder.”
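
If you find yourself asking these follow-ups often, you could keep them as reusable templates. The snippet below is only a minimal sketch of that idea: the names `FOLLOW_UP_TEMPLATES` and `widen_the_lens` are made up for illustration, the wording is adapted from the prompts above, and it does not call ChatGPT or any AI library. It simply builds the text you would paste into a chat.

```python
# Hypothetical helper: these names and templates are illustrative only.
# Nothing here talks to an AI service; it just prepares follow-up prompts.
FOLLOW_UP_TEMPLATES = [
    "Give me 10 women who shaped {topic}.",
    "Now redo that list with non-Western leaders in {topic}.",
    "What perspectives are missing from your answer? "
    "Who might be harmed if we accept it as-is?",
]


def widen_the_lens(topic: str) -> list[str]:
    """Return follow-up prompts to ask after a first answer about `topic`."""
    return [template.format(topic=topic) for template in FOLLOW_UP_TEMPLATES]


if __name__ == "__main__":
    # Print each follow-up so it can be pasted into a chat, one at a time.
    for prompt in widen_the_lens("physics"):
        print(prompt)
```

Swap in your own field for `"physics"`, and add or remove templates to suit the gaps you most want to surface.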

AI doesn’t know what it’s missing, but you do. Your prompts can pull forward the stories that default training data tends to bury. That’s how we build a future where AI supports all people.

Resource references:

https://www.unwomen.org/en/articles/explainer/artificial-intelligence-and-gender-equality

https://www.britannica.com/biography/Saint-Joan-of-Arc

https://www.britannica.com/biography/Cleopatra-queen-of-Egypt

https://www.nobelprize.org/prizes/physics/1903/marie-curie/biographical/

https://www.womenshistory.org/education-resources/biographies/rosa-parks

https://www.britannica.com/biography/Victoria-queen-of-United-Kingdom/Relations-with-Peel

About the Author

Amelia Bentley is a contributing author for the AI Assembly and a Data & AI Scientist at HazardCo. Standing at the intersection of data science and psychology, Amelia is redefining how we interact with machines. Her work focuses on bridging the gap between complex code and human behaviour, ensuring Kiwi businesses adopt new technologies with a people-first mindset.

A champion for diversity in tech, Amelia is a founding member of our HerAIStory community. She recently shared her expertise as a panelist at the Wellington HerAIStory event, helping to shape the narrative for women in AI.
